Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

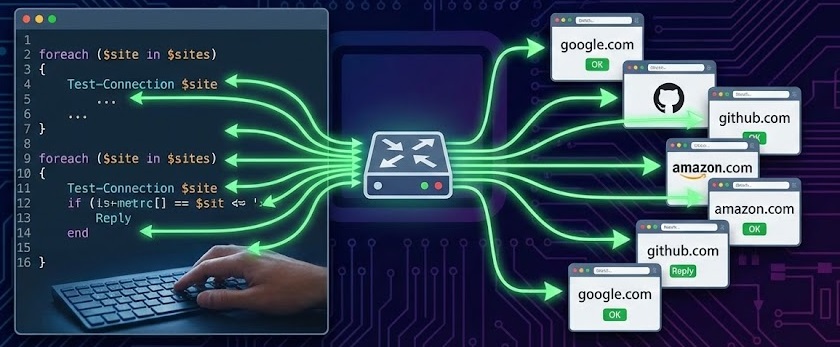

Ever found yourself needing to check if several websites are online, only to realize you’d have to ping each one individually? That’s tedious, especially if you’re monitoring dozens of sites. The good news is there’s a better way—and it’s surprisingly straightforward.

Whether you’re a system administrator keeping tabs on your company’s web services, a developer testing API endpoints, or just someone who wants to monitor their favorite websites, learning to ping multiple sites simultaneously can save you hours of repetitive work.

In this guide, we’ll explore practical solutions for both Windows and Linux users, complete with ready-to-use scripts that you can customize for your specific needs.

Before diving into the technical details, let’s understand why this matters.

Traditional ping commands are designed to check one host at a time. If you need to monitor 20 websites, you’d typically run 20 separate commands. That’s not just time-consuming—it’s inefficient and makes it difficult to see patterns or compare response times at a glance.

Simultaneous pinging lets you check every host in a single run and compare the results side by side.

If you’re on Linux or macOS, Bash scripting offers a lightweight, no-installation-required solution.

Here’s a basic script that pings multiple websites in the background:

bash

#!/bin/bash

# Check if arguments were provided
if [ $# -eq 0 ]; then
    echo "Usage: $0 <hostname or IP address> ..."
    exit 1
fi

# Loop through each argument and ping it in the background
for host in "$@"
do
    ping -c 4 "$host" &
done

# Wait for all background processes to complete
wait

echo "All pings completed!"

How to use it:

Save the script as ping_multiple.sh, make it executable, and run it with the hosts as arguments:

chmod +x ping_multiple.sh
./ping_multiple.sh google.com yahoo.com github.com

The script uses the & symbol to run each ping command in the background, allowing them to execute simultaneously rather than sequentially.
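The same start-everything-then-wait pattern can be sketched in Python with `subprocess.Popen`, which returns as soon as the process is launched, much like `&` in Bash. This is only a minimal sketch and not part of the original script: the helper name `run_concurrently` is hypothetical, and the demo commands are harmless Python one-liners standing in for `ping` so the example runs without network access.

```python
import subprocess
import sys


def run_concurrently(commands):
    """Start every command without blocking, then wait for all of them.

    Returns the exit codes in the same order as `commands`.
    """
    # Popen returns once the process is started (comparable to `cmd &`).
    processes = [
        subprocess.Popen(cmd, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
        for cmd in commands
    ]
    # wait() blocks until that process has exited (comparable to `wait`).
    return [proc.wait() for proc in processes]


if __name__ == "__main__":
    # Stand-in commands (assumption); swap in ["ping", "-c", "4", host] for real use.
    demo = [
        [sys.executable, "-c", "import sys; sys.exit(0)"],
        [sys.executable, "-c", "import sys; sys.exit(1)"],
    ]
    print(run_concurrently(demo))
```

Because all processes are started before any of them is waited on, the total run time is roughly that of the slowest command rather than the sum of all of them.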

For a more informative output, here’s an improved version:

bash

#!/bin/bash

# Array of websites to ping
websites=(
    "google.com"
    "github.com"
    "stackoverflow.com"
    "reddit.com"
    "amazon.com"
)

echo "Starting ping tests..."
echo "===================="

# Ping each website in the background
# Note: -W 2 means "wait up to 2 seconds" on Linux; macOS reads -W in milliseconds
for site in "${websites[@]}"
do
    if ping -c 1 -W 2 "$site" > /dev/null 2>&1; then
        echo "✓ $site is ONLINE"
    else
        echo "✗ $site is OFFLINE or unreachable"
    fi &
done

# Wait for all background jobs
wait

echo "===================="
echo "Ping tests completed!"

This version provides clear visual feedback with checkmarks and crosses, making it easy to see which sites are up or down at a glance.

For Linux users who want more power and flexibility, fping is the go-to tool. Unlike the standard ping command, fping is specifically designed to ping multiple hosts efficiently.

On Ubuntu/Debian:

bash

sudo apt-get install fping

On CentOS/RHEL:

bash

sudo yum install fping

On macOS:

bash

brew install fping

Ping multiple websites directly from the command line:

bash

fping google.com yahoo.com github.com stackoverflow.com

Output example:

google.com is alive
yahoo.com is alive
github.com is alive
stackoverflow.com is alive

For monitoring many sites, create a text file with one hostname per line:

bash

# Create websites.txt
echo "google.com" > websites.txt
echo "github.com" >> websites.txt
echo "stackoverflow.com" >> websites.txt
echo "reddit.com" >> websites.txt
echo "amazon.com" >> websites.txt

Then ping them all:

bash

fping < websites.txt

Get detailed statistics including response times:

bash

fping -s -e google.com github.com stackoverflow.com

Here -e shows the elapsed round-trip time next to each host, and -s prints cumulative statistics when fping exits.
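The per-host lines that fping prints can also be post-processed in a script. The following is a rough sketch, assuming lines shaped like `google.com is alive (12.3 ms)` for reachable hosts and `host is unreachable` otherwise; the exact format can differ between fping versions, and the function name `parse_fping_output` is made up for illustration.

```python
import re

# Assumed line formats (may vary between fping versions):
#   "google.com is alive (12.3 ms)"   with -e
#   "google.com is alive"             without -e
#   "example.org is unreachable"
ALIVE = re.compile(r"^(\S+) is alive(?: \(([\d.]+) ms\))?")
UNREACHABLE = re.compile(r"^(\S+) is unreachable")


def parse_fping_output(text):
    """Map each host to {"alive": bool, "rtt_ms": float or None}."""
    results = {}
    for line in text.splitlines():
        line = line.strip()
        alive = ALIVE.match(line)
        if alive:
            host, rtt = alive.groups()
            results[host] = {"alive": True, "rtt_ms": float(rtt) if rtt else None}
            continue
        down = UNREACHABLE.match(line)
        if down:
            results[down.group(1)] = {"alive": False, "rtt_ms": None}
        # Any other line (blank lines, the -s summary) is ignored.
    return results
```

The text passed in could come from `subprocess.run(["fping", "-e", ...], capture_output=True, text=True).stdout`, which keeps the parsing separate from actually running fping.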

Python offers the most flexibility and works on Windows, Linux, and macOS. Here’s a script that handles timeouts and prints a summary of the results.

python

import subprocess
import platform
from datetime import datetime

# List of websites to ping
websites = [
    "google.com",
    "github.com",
    "stackoverflow.com",
    "reddit.com",
    "amazon.com"
]

def ping_website(host):
    """
    Ping a website and return True if successful, False otherwise
    """
    # Determine ping parameters based on OS
    param = '-n' if platform.system().lower() == 'windows' else '-c'

    # Build ping command
    command = ['ping', param, '1', host]

    try:
        # Execute ping
        output = subprocess.run(
            command,
            stdout=subprocess.PIPE,
            stderr=subprocess.PIPE,
            timeout=5
        )
        return output.returncode == 0
    except subprocess.TimeoutExpired:
        return False

def main():
    print(f"Ping Test Started: {datetime.now().strftime('%Y-%m-%d %H:%M:%S')}")
    print("=" * 50)

    results = {}

    for website in websites:
        status = ping_website(website)
        results[website] = status

        if status:
            print(f"✓ {website:30} ONLINE")
        else:
            print(f"✗ {website:30} OFFLINE")

    print("=" * 50)

    # Summary
    online = sum(results.values())
    total = len(results)
    print(f"\nSummary: {online}/{total} websites are online")

if __name__ == "__main__":
    main()

To run this script, save it as ping_checker.py and run:

python ping_checker.py

For faster execution when checking many websites, use threading:

python

import subprocess
import platform
import threading
from datetime import datetime
from queue import Queue

websites = [
    "google.com",
    "github.com",
    "stackoverflow.com",
    "reddit.com",
    "amazon.com",
    "twitter.com",
    "facebook.com",
    "linkedin.com"
]

results = {}
results_lock = threading.Lock()

def ping_website(host):
    """Ping a website and store result"""
    if platform.system().lower() == 'windows':
        # On Windows, -w is the reply timeout in milliseconds
        command = ['ping', '-n', '1', '-w', '2000', host]
    else:
        # -w and -W mean different things on Linux and macOS,
        # so rely on the subprocess timeout below instead
        command = ['ping', '-c', '1', host]

    try:
        output = subprocess.run(
            command,
            stdout=subprocess.PIPE,
            stderr=subprocess.PIPE,
            timeout=3
        )
        status = output.returncode == 0
    except (subprocess.TimeoutExpired, OSError):
        # Timed out, or the ping binary could not be started
        status = False

    with results_lock:
        results[host] = status

def worker(queue):
    """Worker thread to process ping tasks"""
    while True:
        host = queue.get()
        if host is None:
            break
        ping_website(host)
        queue.task_done()

def main():
    print(f"Multi-threaded Ping Test: {datetime.now().strftime('%Y-%m-%d %H:%M:%S')}")
    print("=" * 60)

    # Create queue and threads
    queue = Queue()
    threads = []

    # Start worker threads
    for _ in range(min(10, len(websites))):
        t = threading.Thread(target=worker, args=(queue,))
        t.start()
        threads.append(t)

    # Queue all websites
    for website in websites:
        queue.put(website)

    # Wait for completion
    queue.join()

    # Stop workers
    for _ in threads:
        queue.put(None)
    for t in threads:
        t.join()

    # Display results
    for website in sorted(results.keys()):
        status = results[website]
        symbol = "✓" if status else "✗"
        state = "ONLINE" if status else "OFFLINE"
        print(f"{symbol} {website:35} {state}")

    print("=" * 60)
    online = sum(results.values())
    print(f"Summary: {online}/{len(results)} websites online")

if __name__ == "__main__":
    main()

This threaded version can check dozens of websites in just a few seconds by running multiple pings simultaneously.
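For comparison, the worker-and-queue pattern can be written more compactly with the standard library's `concurrent.futures.ThreadPoolExecutor`. This is an alternative sketch rather than the script above: the names `ping_once` and `check_all` are hypothetical, and the `check` parameter is added here only so the checker can be swapped out, for example in tests.

```python
from concurrent.futures import ThreadPoolExecutor
import platform
import subprocess


def ping_once(host, timeout=3):
    """Return True if a single ping to `host` succeeds within `timeout` seconds."""
    count_flag = "-n" if platform.system().lower() == "windows" else "-c"
    try:
        completed = subprocess.run(
            ["ping", count_flag, "1", host],
            stdout=subprocess.DEVNULL,
            stderr=subprocess.DEVNULL,
            timeout=timeout,
        )
        return completed.returncode == 0
    except (subprocess.TimeoutExpired, OSError):
        # Timed out, or ping could not be started at all.
        return False


def check_all(hosts, check=ping_once, max_workers=10):
    """Run `check` on every host concurrently and return {host: result}."""
    hosts = list(hosts)
    if not hosts:
        return {}
    with ThreadPoolExecutor(max_workers=min(max_workers, len(hosts))) as pool:
        # pool.map yields results in input order, so zip pairs them correctly.
        return dict(zip(hosts, pool.map(check, hosts)))
```

Calling `check_all(["google.com", "github.com"])` returns a dictionary keyed by host. The executor handles thread start-up, shutdown, and result collection, so no explicit lock or sentinel values are needed.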

Windows users can leverage PowerShell for a native solution that doesn’t require installing additional tools.

powershell

# List of websites to ping
$websites = @(
    "google.com",
    "github.com",
    "stackoverflow.com",
    "reddit.com",
    "amazon.com"
)

Write-Host "`nPing Test Started: $(Get-Date -Format 'yyyy-MM-dd HH:mm:ss')" -ForegroundColor Cyan
Write-Host ("=" * 60)

foreach ($site in $websites) {
    $ping = Test-Connection -ComputerName $site -Count 1 -Quiet

    if ($ping) {
        Write-Host "✓ $site" -ForegroundColor Green -NoNewline
        Write-Host " is ONLINE"
    } else {
        Write-Host "✗ $site" -ForegroundColor Red -NoNewline
        Write-Host " is OFFLINE"
    }
}

Write-Host ("=" * 60)

To run, save the script as ping_websites.ps1 and execute it from a PowerShell prompt:

.\ping_websites.ps1

For faster results, use PowerShell’s parallel capabilities (PowerShell 7+):

powershell

$websites = @(
    "google.com",
    "github.com",
    "stackoverflow.com",
    "reddit.com",
    "amazon.com"
)

Write-Host "`nParallel Ping Test Started" -ForegroundColor Cyan

$results = $websites | ForEach-Object -Parallel {
    $site = $_
    $ping = Test-Connection -ComputerName $site -Count 1 -Quiet -TimeoutSeconds 2

    [PSCustomObject]@{
        Website = $site
        Status = $ping
    }
} -ThrottleLimit 10

# Display results
$results | ForEach-Object {
    if ($_.Status) {
        Write-Host "✓ $($_.Website)" -ForegroundColor Green
    } else {
        Write-Host "✗ $($_.Website)" -ForegroundColor Red
    }
}

$online = ($results | Where-Object { $_.Status }).Count
Write-Host "`nSummary: $online/$($websites.Count) websites online"

For continuous monitoring, you’ll want to log results and potentially create alerts. Here’s a Python script that writes results to a file:

python

import csv
import os
import platform
import subprocess
import threading
from datetime import datetime
from queue import Queue

websites = [
    "google.com",
    "github.com",
    "stackoverflow.com",
    "reddit.com"
]

results = {}
results_lock = threading.Lock()

def ping_website(host):
    """Ping a website and return "Online", "Offline", "Timeout", or "Error"."""
    param = '-n' if platform.system().lower() == 'windows' else '-c'
    command = ['ping', param, '1', host]

    try:
        output = subprocess.run(
            command,
            stdout=subprocess.PIPE,
            stderr=subprocess.PIPE,
            timeout=3,
            text=True
        )
        # Response-time parsing is omitted; only the status is reported
        if output.returncode == 0:
            return "Online"
        else:
            return "Offline"
    except subprocess.TimeoutExpired:
        return "Timeout"
    except OSError:
        # The ping command could not be started (for example, not installed)
        return "Error"

def worker(queue):
    while True:
        host = queue.get()
        if host is None:
            break
        status = ping_website(host)
        with results_lock:
            results[host] = status
        queue.task_done()

def save_to_csv(filename="ping_log.csv"):
    """Save results to CSV file"""
    timestamp = datetime.now().strftime('%Y-%m-%d %H:%M:%S')
    file_exists = os.path.exists(filename)

    with open(filename, 'a', newline='') as csvfile:
        # Take the column order from the configured list, not from the
        # results dict, whose order depends on which thread finished first
        fieldnames = ['Timestamp'] + websites
        writer = csv.DictWriter(csvfile, fieldnames=fieldnames)

        if not file_exists:
            writer.writeheader()

        row = {'Timestamp': timestamp}
        row.update(results)
        writer.writerow(row)

def main():
    queue = Queue()
    threads = []

    # Start workers
    for _ in range(min(5, len(websites))):
        t = threading.Thread(target=worker, args=(queue,))
        t.start()
        threads.append(t)

    # Queue websites
    for website in websites:
        queue.put(website)

    queue.join()

    # Stop workers
    for _ in threads:
        queue.put(None)
    for t in threads:
        t.join()

    # Display and save results
    print(f"Check completed at: {datetime.now().strftime('%Y-%m-%d %H:%M:%S')}")
    for site, status in results.items():
        print(f"{site}: {status}")

    save_to_csv()
    print("\nResults saved to ping_log.csv")

if __name__ == "__main__":
    main()

This script creates a CSV log file that you can analyze later or import into spreadsheet software for trend analysis.
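As one example of such later analysis, the log can be reduced to an uptime percentage per site. The sketch below assumes the layout written by the script above (a Timestamp column followed by one status column per site, with "Online" marking a successful check); the function name `uptime_by_site` is hypothetical.

```python
import csv


def uptime_by_site(lines):
    """Return {site: percentage of rows whose status is "Online"}.

    `lines` is any iterable of CSV text lines, such as an open file.
    """
    counts = {}  # site -> [online_rows, total_rows]
    for row in csv.DictReader(lines):
        for site, status in row.items():
            # Skip the timestamp, overflow cells (key None) and missing cells.
            if site is None or site == "Timestamp" or status is None:
                continue
            online_total = counts.setdefault(site, [0, 0])
            online_total[1] += 1
            if status == "Online":
                online_total[0] += 1
    return {
        site: 100.0 * online / total
        for site, (online, total) in counts.items()
    }
```

With a real log this could be called as `with open("ping_log.csv", newline="") as f: print(uptime_by_site(f))`. Rows recorded as anything other than "Online" count against uptime, which is a simplification that may not suit every use case.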

To run your script every 5 minutes, add to crontab:

bash

# Edit crontab
crontab -e

# Add this line (adjust path to your script)
*/5 * * * * /usr/bin/python3 /path/to/your/ping_checker.py >> /var/log/ping_check.log 2>&1

Choose the Right Timeout: Set timeouts appropriate for your use case. Web servers might respond in milliseconds, while remote servers might take longer.

Limit Concurrent Pings: Don’t ping too many hosts simultaneously. Most systems handle 10-20 concurrent pings well, but hundreds might cause issues.

Handle Failures Gracefully: Always implement error handling. Networks are unreliable, and your script should handle timeouts and failures without crashing.

Consider Rate Limiting: Some hosts might block you if you ping too frequently. For continuous monitoring, 1-5 minute intervals are usually reasonable.

Use Appropriate Tools: For simple checks, bash scripts work fine. For production monitoring, consider dedicated tools like fping or Nagios.

Log Everything: Keep historical data to identify patterns and trends in connectivity or performance issues.
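The advice on timeouts and graceful failure handling can be illustrated with a small wrapper that classifies every outcome instead of letting an exception end the run. This is a minimal sketch under stated assumptions: `run_check` and its four status strings are invented for illustration, and the command is passed in by the caller so the same function works with `ping`, `fping`, or any other checker.

```python
import subprocess
import sys


def run_check(command, timeout=3):
    """Run `command` and classify the outcome instead of raising.

    Returns "online" (exit code 0), "offline" (non-zero exit code),
    "timeout" (no result within `timeout` seconds) or "error"
    (the command could not be started at all).
    """
    try:
        completed = subprocess.run(
            command,
            stdout=subprocess.DEVNULL,
            stderr=subprocess.DEVNULL,
            timeout=timeout,
        )
    except subprocess.TimeoutExpired:
        return "timeout"
    except OSError:
        # Covers FileNotFoundError when the executable is missing.
        return "error"
    return "online" if completed.returncode == 0 else "offline"


if __name__ == "__main__":
    # Stand-in command (assumption); use e.g. ["ping", "-c", "1", host] in practice.
    print(run_check([sys.executable, "-c", "pass"]))
```

Keeping "timeout" and "error" separate from "offline" makes the logs more useful, since a missing `ping` binary or a slow network is a different problem from a host that is actually down.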

Pinging multiple websites simultaneously doesn’t have to be complicated. Whether you choose a simple Bash script, leverage the power of fping, or create a sophisticated Python monitoring tool, you now have the knowledge to monitor multiple endpoints efficiently.

Start with the basic scripts provided here, then customize them for your specific needs. Add features like email alerts, Slack notifications, or graphical dashboards as your requirements grow.